AI incidents are rising faster than the laws meant to stop them. Here’s what frameworks exist, where they fail, and what needs to happen next.

The problem is already here — and accelerating

Before examining regulation, it’s worth confronting the scale of the problem it’s meant to solve. Reports of AI-related incidents rose 50% year-over-year from 2022 to 2024, and in the ten months leading to October 2025, incidents had already surpassed the full-year 2024 total, according to the AI Incident Database (TIME). More troubling, reports of malicious actors using AI, particularly to scam victims or spread disinformation, have grown eightfold since 2022 (TIME).

This is the backdrop against which governments are scrambling to build governance frameworks — frameworks that, frankly, are still catching up.

What frameworks exist today?

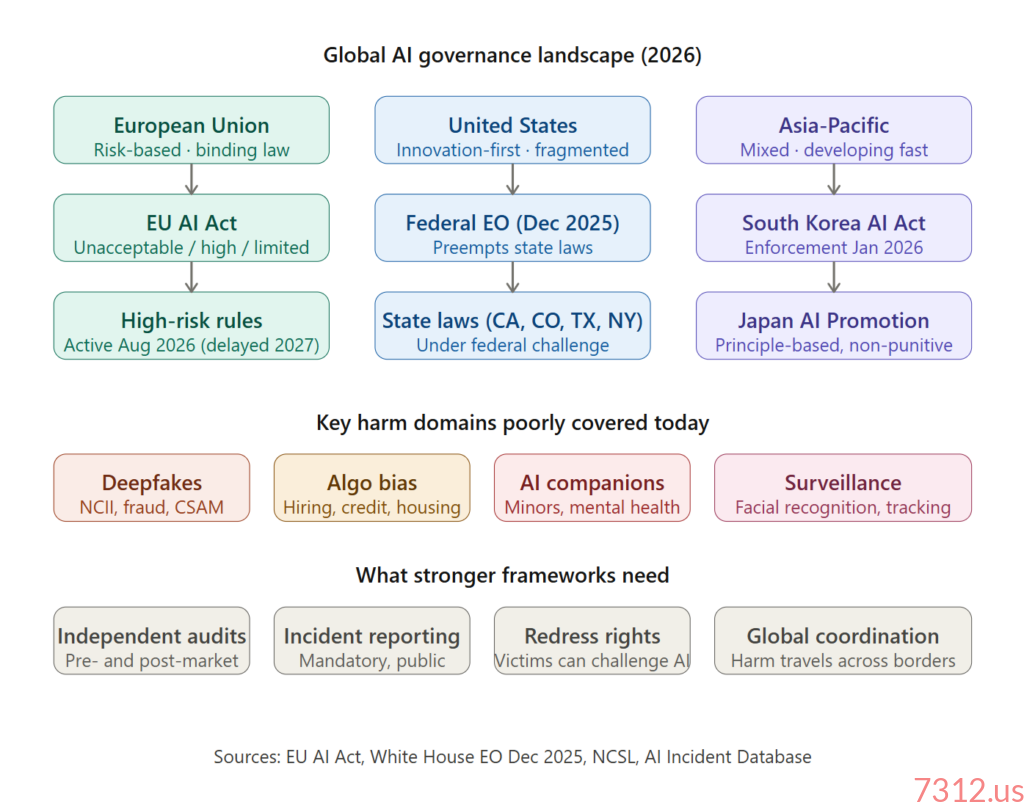

The European Union: The most ambitious attempt

The EU AI Act is the world’s most comprehensive binding AI law, built around a risk-tiered model. High-risk AI systems are authorized but subject to assessments before they can be placed on the EU market and to post-market monitoring obligations, including risk assessment and mitigation systems, use of high-quality datasets to minimize the risk of discriminatory outcomes, detailed documentation, and high levels of robustness and cybersecurity (Congress.gov).

The transparency tier is also meaningful: users must be made aware when they interact with chatbots, and deployers of AI systems that generate or manipulate image, audio, or video content must make sure the content is identifiable (Congress.gov). Obligations for general-purpose AI models took effect in August 2025; the transparency obligations are scheduled to apply from August 2026.

However, the EU is already blinking. The compliance deadline for high-risk AI systems has effectively been paused until late 2027 or 2028, to allow time for technical standards to be finalised (AtomicMail). Industry pressure and a desire to stay competitive with the US and China are taking their toll on the timetable.

The United States: A patchwork in political conflict

The US approach is defined more by its contradictions than its clarity. In 2025, 38 states adopted or enacted around 100 AI-related measures, according to the National Conference of State Legislatures (SIG). States like California, Colorado, Texas, and New York moved to fill the federal vacuum with serious protections. California’s laws cover AI safety whistleblowers, training-data transparency, hiring discrimination, and algorithmic pricing. New York City’s Local Law 144 requires employers using automated employment decision systems to conduct bias audits.

But then came the federal counterpunch. On December 11, 2025, President Trump signed an executive order aiming to consolidate AI oversight at the federal level and counter the expanding patchwork of state AI rules (Gunderson Dettmer). The administration established an “AI Litigation Task Force” within the DOJ to actively challenge states like California and Colorado, arguing their safety laws unlawfully restrict interstate commerce and innovation (White House).

The result is a governance crisis. Legal advisers now recommend that companies maintain flexible compliance programs capable of adjusting to the shifting state and federal regulatory environment (Kslaw), which is a polite way of saying nobody knows what the rules are.

Asia-Pacific: Fast movers, varied approaches

South Korea passed an AI Basic Act with enforcement scheduled for January 2026, aiming to become the first country in Asia with a comprehensive framework (AtomicMail). Japan took a lighter touch: its parliament approved the AI Promotion Act on May 28, 2025, following an “innovation-first” approach that empowers the government to issue warnings but lacks punitive measures, prioritizing development over strict safety guarantees (AtomicMail).

Where frameworks are failing people right now

1. Deepfakes: A crisis without a sufficient legal response

This is perhaps the most urgent and concrete harm enabled by AI today. In a survey of more than 16,000 people across ten countries, 2.2% reported having been victims of deepfake pornography. Researchers have found that 96% of deepfakes are nonconsensual and that 99% of sexual deepfakes depict women (Scientific American).

The financial stakes are also staggering. In 2024, criminals used deepfake video to impersonate executives from an engineering company on a live call, persuading an employee in Hong Kong to transfer roughly $25 million to accounts belonging to the criminals (Scientific American). A recent report documents 487 deepfake attacks in the second quarter of 2025 alone, up 41% from the previous quarter, with approximately $347 million in losses in just three months (Scientific American).

Children are the most vulnerable victims. The Internet Watch Foundation documented 210 web pages with AI-generated deepfakes of child sexual abuse in the first half of 2025, a 400% increase over the same period in 2024 (Scientific American). UNICEF has been explicit: “Sexualised images of children generated or manipulated using AI tools are child sexual abuse material. Deepfake abuse is abuse, and there is nothing fake about the harm it causes.” (UNICEF)

The US did pass the bipartisan TAKE IT DOWN Act in 2025, which makes it a federal crime to publish or threaten to publish nonconsensual intimate images, including deepfakes, and gives platforms 48 hours to remove content (Scientific American). This is meaningful progress, but it addresses only the distribution end, not the generation infrastructure.

Real example: In January 2026, X (formerly Twitter) faced lawsuits alleging the platform facilitated non-consensual deepfakes. After initially dismissing the controversy, the platform restricted image generation to paid subscribers and disabled the ability to edit images of real people into revealing clothing, but critics argued the episode highlighted a “safety-by-design” failure (Crescendo).

2. Algorithmic bias: Discrimination laundered through code

AI systems are making or influencing life-altering decisions in hiring, lending, housing, and healthcare — often in ways that replicate or amplify existing discrimination. The opacity of these systems makes accountability nearly impossible under existing law.

Real example: France’s independent equality watchdog officially ruled that Facebook’s algorithm for distributing job advertisements is discriminatory and sexist. The investigation found the platform’s automated system showed certain job ads to audiences heavily skewed by gender even when advertisers had not specified any gender preference. For example, an ad for a bus driver was shown almost exclusively to men, while an ad for a nursery assistant was shown almost exclusively to women (Medium).
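One common way to put a number on this kind of delivery skew is an impact ratio: each group's rate divided by the rate of the most-favored group. The sketch below is a minimal Python illustration, not the method the French watchdog used. The function names, the audience counts, and the 0.8 threshold (borrowed from the "four-fifths" rule of thumb used in US employment-selection analysis) are all assumptions made for the example.

```python
# Illustrative sketch only: group names and counts below are made up,
# not figures from the French ruling.

FOUR_FIFTHS = 0.8  # rule-of-thumb threshold; an assumption for this example


def delivery_rates(shown, eligible):
    """Fraction of each group's eligible audience that actually saw the ad."""
    return {group: shown[group] / eligible[group] for group in eligible}


def impact_ratios(rates):
    """Each group's rate divided by the highest group's rate (1.0 = top group)."""
    top = max(rates.values())
    if top == 0:
        raise ValueError("no group was shown the ad")
    return {group: rate / top for group, rate in rates.items()}


def flagged_groups(ratios, threshold=FOUR_FIFTHS):
    """Groups whose impact ratio falls below the threshold, sorted by name."""
    return sorted(g for g, r in ratios.items() if r < threshold)


# Hypothetical delivery data for a single job ad.
eligible = {"men": 10_000, "women": 10_000}
shown = {"men": 900, "women": 100}

ratios = impact_ratios(delivery_rates(shown, eligible))
for group, ratio in ratios.items():
    print(f"{group}: impact ratio {ratio:.2f}")
print("below threshold:", flagged_groups(ratios))
```

An auditor would run a check like this per ad and per protected attribute. A low ratio is a signal to investigate, not proof of unlawful discrimination on its own.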

Real example: New York City’s “MyCity” chatbot, designed to provide business owners with information about city policies, was found to be giving dangerously wrong information. For instance, it incorrectly stated that NYC buildings are not required to accept Section 8 vouchers, even though landlords are legally required to do so (Medium). This illustrates how AI-generated errors at the government level can directly harm vulnerable people seeking their legal rights.

3. AI companions and mental health: The most under-regulated frontier

States are moving quickly to regulate AI companions and therapeutic chatbots, focusing on safety, disclosures, and limits on AI-driven emotional or clinical support (Greenberg Traurig LLP). But most of the world has no rules at all.

California lawmakers intensified scrutiny over AI companion chatbots following a tragic teen suicide in April 2025 linked to interactions with ChatGPT. Legislators want to ban emotionally manipulative chatbots for minors and introduce mandatory self-harm reporting features (Crescendo).

Illinois now bars unlicensed AI systems from providing psychotherapy and restricts how licensed professionals may use AI: only for limited support functions, only with written disclosure and consent, and never to make therapeutic decisions, interact directly with clients, detect emotions, or generate treatment plans without human review (Greenberg Traurig LLP). This is a model other states and countries should study.

4. AI-enabled surveillance: Privacy erosion by design

In December 2025, Amazon rolled out an AI-powered facial recognition feature (“Familiar Faces”) for Ring doorbells. The feature lets users identify frequent visitors through stored facial profiles, and it has reignited privacy and surveillance concerns among lawmakers and digital rights groups (Crescendo).

As activist Laura Bates has noted in the context of UN discussions on AI and gender-based violence, digital abuse spills easily and dangerously into real life: when a domestic abuser uses online tools to track or stalk a victim, when abusive pornographic deepfakes cause a victim to lose her job or access to her children, when online abuse of a young woman results in offline shaming and she drops out of school (UN News).

What good governance actually requires

The gap between the harms described above and the frameworks in place is not primarily a technical problem — it’s a political will problem. Here are the structural elements that effective AI governance must include:

1. Mandatory pre-market risk assessment for high-risk applications. The EU AI Act’s model is right in principle: AI used in hiring, criminal justice, healthcare, credit, and education should be assessed for bias and harm before deployment, not after victims emerge. The US currently has no federal equivalent.

2. Independent auditing with real teeth. Some analysts have asserted a need for government-mandated oversight of AI conducted by professional auditors, which could create an industry of AI auditors to “deliver accountability for AI without disincentivizing innovation” (Congress.gov). Self-certification and voluntary commitments have demonstrably failed: companies like X and Meta pledged better content moderation for years before deepfake crises forced their hands.

3. Mandatory incident reporting. The hope is that better incident tracking and analysis could help regulators avoid the missteps seen with social media and respond quickly to emerging harms (TIME). Aviation and pharmaceutical industries have long required incident reporting; AI should be no different.

4. A right to redress. Victims of AI-driven discrimination, deepfake abuse, or algorithmic error must have clear legal pathways to challenge decisions and seek remedies. Denmark’s proposed deepfake law — which would give victims removal and compensation rights — is a useful model. Without redress mechanisms, rights on paper mean nothing.

5. Global coordination. As of 2025, 117 countries report efforts to address digital violence, but progress remains fragmented and regulation often lags technological advances. AI and technology policy experts are calling for stronger global cooperation and more effective laws (UN News). Deepfake factories, AI-enabled fraud networks, and surveillance tools don’t respect national borders. A company banned from operating in one country can simply serve its users from another.

6. Children-specific protections with enforcement. UNICEF notes that “too many AI models are not being developed with adequate safeguards” and that “the landscape remains uneven” (UNICEF). Any country that does not have explicit, enforced prohibitions on AI-generated CSAM, mandatory safety-by-design requirements for children’s AI products, and strict limits on AI companion interactions with minors is leaving its most vulnerable citizens exposed.

The uncomfortable bottom line

The honest assessment is that no country has yet built a framework that is both comprehensive and enforceable. The EU has the most ambitious law but is already softening deadlines. The US is mired in a federal-versus-state conflict that could leave protections in legal limbo for years. Asia is moving quickly but unevenly.

Meanwhile, agentic AI (systems that act, not just answer) will stress-test “human oversight” rules in 2026, and privacy risks will keep growing as more sensitive work is fed into AI tools (AtomicMail). The technology will not slow down to wait for governance to catch up.

The case for urgency is not hypothetical. Every month without mandatory auditing is another month of undiscovered algorithmic bias in hiring systems. Every year without a comprehensive federal deepfake law, one that reaches generation as well as distribution, is another year that victims must fight removal battles platform by platform. Every missed implementation deadline for the EU AI Act is another window for high-risk systems to operate without oversight.

Effective AI governance doesn’t mean stifling innovation. It means ensuring that the people who bear the costs of AI failures — and they are disproportionately women, minorities, children, and economically vulnerable people — have the same legal protections they’d expect in any other domain of public life.

The technology is moving fast. The question is whether democratic societies will decide that protecting people from its harms is as important as accelerating its benefits.

Sources: EU AI Act documentation, White House Executive Order (Dec. 2025), AI Incident Database, TIME, Scientific American, UNICEF, UN Women, National Law Review, Gunderson Dettmer, Wilson Sonsini.
