We’ve officially entered the era of Vibe Coding.

If you’ve spent any time on “DevTwitter” or LinkedIn recently, you’ve seen the shift. We’re moving away from the era of grueling, bracket-heavy syntax and into a world where we describe a feeling, a flow, or a “vibe,” and an AI agent hands us a functional application.

But as the barrier to entry drops, the potential for chaos rises. Let’s break down what vibe coding actually is, why it’s awesome, and why it can be a security nightmare if you aren’t paying attention.

What Exactly is “Vibe Coding”?

At its core, vibe coding is the practice of using Large Language Models (LLMs) to generate entire blocks of code based on high-level natural language prompts.

Instead of writing a specific for loop, you tell the AI: “Make the login screen look like a 90s vaporwave aesthetic and ensure it connects to my Supabase backend.” You aren’t coding the logic; you’re curating the intent. You are “vibing” with the model until the output matches your vision.

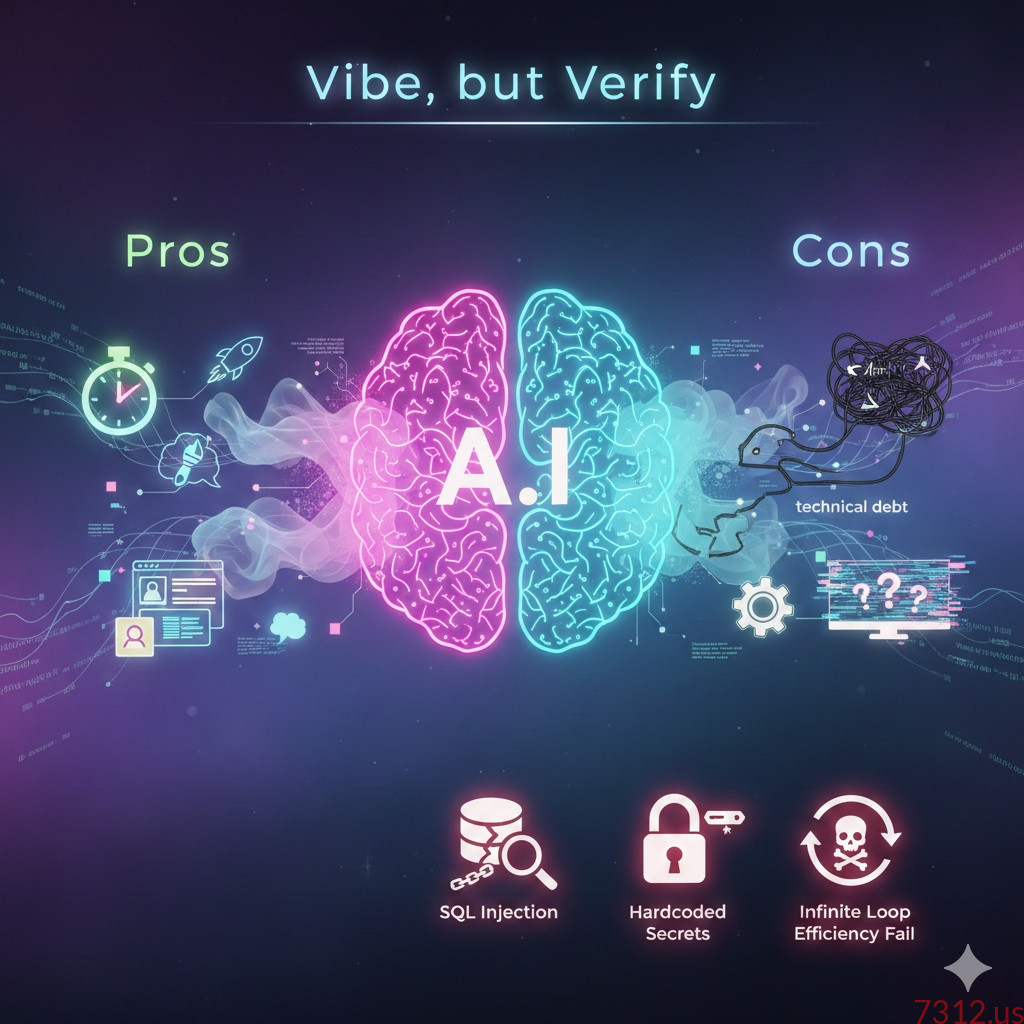

The Pros: Why We Love It

- Velocity: You can go from “idea” to “MVP” (Minimum Viable Product) in an afternoon.

- Accessibility: It opens the door for designers, product managers, and “non-coders” to build real tools.

- Less Context Switching: It handles the boring boilerplate (API setups, CSS resets) so you can stay focused on the big picture.

- Overcoming the Blank Page: It’s much easier to edit code than it is to write it from scratch.

The Cons: The Hidden Costs

- The “Black Box” Problem: If you didn’t write the code, do you actually understand how it works?

- Technical Debt: AI often prioritizes “working now” over “scalable later,” leading to messy, unoptimized codebases.

- Hallucinations: Sometimes the AI suggests libraries that don’t exist, or uses deprecated methods that may stop working after the next upgrade.
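One cheap guard against the hallucination problem is to confirm that every module the AI imported actually resolves in your environment before you run anything. Here is a minimal Python sketch; the helper name `missing_modules` is made up for illustration, and it only checks what is installed locally, not whether a package of that name is legitimate.

```python
import importlib.util


def missing_modules(module_names: list[str]) -> list[str]:
    """Return the names that cannot be resolved in the current environment."""
    missing = []
    for name in module_names:
        try:
            # find_spec returns None when a top-level module is not installed.
            spec = importlib.util.find_spec(name)
        except (ImportError, ValueError):
            # Raised for dotted names whose parent package is missing,
            # or for modules with a broken __spec__.
            spec = None
        if spec is None:
            missing.append(name)
    return missing
```

If the list comes back non-empty, look the package up yourself before installing anything with a similar-sounding name.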

When the Vibes Go Sour: Bad and Insecure Code

The biggest danger of vibe coding is blind trust. When you’re vibing, you might overlook critical security flaws that an LLM casually slipped into the script.

1. The SQL Injection Trap

If you ask an AI to “make a quick search bar for my users,” it might give you something like this:

Python

# BAD VIBE: Insecure and prone to SQL Injection

query = f"SELECT * FROM users WHERE username = '{user_input}'"

cursor.execute(query)

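Why it’s bad: A malicious user could enter ' OR '1'='1 into your search bar. The injected quote closes the string early and the OR condition is always true, so the query matches every row in the users table instead of one. An experienced developer knows to use parameterized queries, but a “vibing” AI might prioritize simplicity over safety.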
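For contrast, here is a sketch of the parameterized version. It assumes Python’s built-in sqlite3 driver and a hypothetical `users` table with `id` and `username` columns; the helper names are invented for the example. Other drivers use a different placeholder (psycopg2 uses `%s`, for instance), but the idea is the same.

```python
import sqlite3


def find_user(conn: sqlite3.Connection, user_input: str) -> list[tuple]:
    """Look up a user by exact username using a parameterized query."""
    # The ? placeholder makes the driver pass user_input as data,
    # so it is never interpreted as part of the SQL statement.
    cursor = conn.execute(
        "SELECT id, username FROM users WHERE username = ?",
        (user_input,),
    )
    return cursor.fetchall()


def make_demo_db() -> sqlite3.Connection:
    """Build a throwaway in-memory database (illustrative schema only)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, username TEXT)")
    conn.executemany(
        "INSERT INTO users (username) VALUES (?)",
        [("alice",), ("bob",)],
    )
    return conn
```

With this version, the ' OR '1'='1 payload is just a strange username that matches nobody.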

2. Hardcoded Secrets

AI loves to help you “test” things quickly, which often leads to this:

JavaScript

// BAD VIBE: Leaking sensitive information

const stripe = require('stripe')('sk_test_51Mz...this_is_my_real_secret_key');

Why it’s bad: If you accidentally push this code to a public GitHub repository, bots that scan for leaked credentials can pick up the key within minutes, and anyone holding a live key can run up charges on your account before you’ve even finished your coffee.
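The usual fix is to keep the key out of the source entirely and read it from the environment at startup. The sketch below shows the idea in Python rather than JavaScript; the variable name `STRIPE_SECRET_KEY` and the helper `load_stripe_key` are assumptions for illustration, not anything Stripe requires.

```python
import os


def load_stripe_key(env_var: str = "STRIPE_SECRET_KEY") -> str:
    """Read the API key from an environment variable instead of the source."""
    key = os.environ.get(env_var)
    if not key:
        # Fail loudly at startup rather than falling back to a hardcoded value.
        raise RuntimeError(f"{env_var} is not set; refusing to start without it")
    return key
```

Set the variable in your shell or deployment config, and keep any local `.env` file out of version control.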

3. The “Nested Loop” Efficiency Fail

Sometimes the vibes are aesthetically pleasing but computationally expensive:

JavaScript

// BAD VIBE: O(n^2) complexity on a simple filter

const users = getLargeUserList();

const activeUsers = users.filter(u => {

return otherList.find(item => item.id === u.id); // Nested loops!

});

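Why it’s bad: This might work fine with 10 users during your “vibe session.” But every call to find scans otherList from the start, so if both lists hold 10,000 entries, that is up to 100 million comparisons. Your app will slow to a crawl because the AI chose a naive, inefficient approach.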
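A common fix is to build a set of ids once and check membership against it. Here is a Python sketch of the same filter; the function name and the dict-shaped records are assumptions made for the example.

```python
def active_users(users: list[dict], other_list: list[dict]) -> list[dict]:
    """Keep only the users whose id also appears in other_list."""
    # Build the lookup set once: a single pass over other_list.
    active_ids = {item["id"] for item in other_list}
    # Set membership is O(1) on average, so the filter is one pass over users.
    return [u for u in users if u["id"] in active_ids]
```

The work grows with the combined size of the two lists rather than their product.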

The Verdict: Vibe, but Verify

Vibe coding is a superpower, but every superhero needs a reality check. The goal isn’t to stop using AI; it’s to become a great editor. Use the AI to generate the bulk, but put on your “senior dev hat” to audit the security, performance, and logic.

Don’t let the “vibe” distract you from the “verify.”