The following prompt was used: Review https://www.linkedin.com/news/story/reading-between-the-lines-of-tech-workers-exit-letters-7652153/ and explain why AI researchers are submitting their resignation. State an opinion about their reasons for leaving.

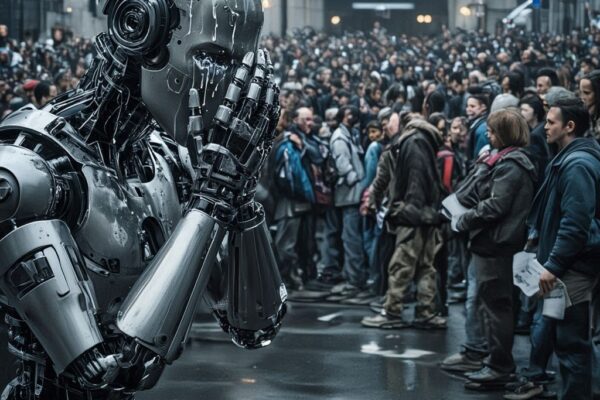

The LinkedIn news story titled “Reading between the lines of tech workers’ exit letters” examines a recent spate of high-profile resignations by AI researchers and safety experts at leading companies like OpenAI, Anthropic, and xAI. It frames these departures as more than typical job changes: many are accompanied by public letters or op-eds that serve as warnings about the industry’s direction. The piece highlights how these exits reveal tensions between AI’s potential for immense benefits and its risks of catastrophic harm, with many resigners criticizing a shift from mission-driven safety priorities to aggressive commercialization and profit-chasing.

Why AI Researchers Are Resigning

Based on the article, the primary drivers for these resignations stem from deep concerns over AI safety, ethical compromises, and corporate strategies that prioritize speed and revenue over risk mitigation. Here’s a breakdown of the key reasons, drawn from specific examples:

- Erosion of Safety Priorities: Many resigners, particularly from safety and alignment teams (which focus on ensuring AI systems align with human values and don’t cause harm), argue that commercial pressures are sidelining critical safeguards. For instance, Mrinank Sharma, a safety lead at Anthropic, publicly resigned via a post on X, stating that “the world is in peril” due to unchecked AI progress. He pointed to internal pressures on safety teams to deprioritize concerns like bioterrorism risks or AI-enabled chemical weapon development, despite Anthropic’s founding ethos as the “conscience of AI.” The company’s own safety report acknowledged that its latest model has “elevated susceptibility to harmful misuse,” underscoring how market demands for rapid releases trump thorough risk assessments.

- Ethical Concerns Over Monetization and Exploitation: A major flashpoint is the integration of advertising and other profit models into AI tools, which resigners see as incompatible with ethical AI development. Zoë Hitzig, an OpenAI researcher, quit after two years and detailed her reasons in a New York Times op-ed, comparing OpenAI’s ad plans for ChatGPT to Facebook’s data scandals. She highlighted how users share sensitive personal details (e.g., health issues, finances, or relationships) with AI, creating opportunities for subtle manipulation or psychological targeting if ads are woven in. Hitzig rejected CEO Sam Altman’s argument that ads enable broader access, viewing it instead as a betrayal that turns AI from a helpful confidant into an exploitative tool. This echoes broader worries about AI being used for political influence or personal harm without adequate protections.

- Risks of Catastrophic Harm and Misaligned Incentives: Resigners frequently warn that AI systems could lead to “extreme and even irrecoverable harm” if not handled carefully. Daniel Kokotajlo, a former OpenAI employee, resigned, noting that advanced AI could be “the best or worst thing for humanity” and criticizing the company’s pivot from societal benefit to product shipping and revenue generation. Similarly, members of OpenAI’s disbanded Superalignment team left, arguing that commercial priorities overshadow efforts to mitigate risks from superintelligent systems. At Anthropic, Dylan Scandinaro echoed these sentiments before moving to OpenAI, while xAI saw co-founders Tony Wu and Jimmy Ba depart amid reorganizations tied to mergers such as the one with SpaceX. Overall, the article points to competition for resources between safety and research teams on one side and product teams on the other, with examples of AI agents potentially enabling cyber attacks or disrupting critical infrastructure (e.g., energy grids or healthcare) because of inadequate security controls.

- Broader Industry Trends: The story notes a pattern in which nearly half of OpenAI’s AGI safety team has left since 2022, alongside exits from VERSES AI and from Apple’s AI leadership (who joined Meta). These are portrayed as whistleblower actions that amplify public debates on AI ethics as systems scale rapidly. Common threads include fears of psychological harm from AI interactions, the vulnerability of human-paced infrastructure to machine-speed AI, and a sense that founding missions are being abandoned for “epic gains” like IPOs or ad revenue. OpenAI alone projects a $14 billion loss in 2026, a financial pressure that fuels such shifts.

In essence, these researchers aren’t leaving for better pay or out of routine burnout; their public statements position the resignations as ethical stands against an industry racing toward potentially disastrous outcomes without sufficient brakes.

My Opinion on Their Reasons for Leaving

While I respect the courage it takes to walk away from high-stakes roles at cutting-edge companies, I believe these reasons, though grounded in legitimate ethical qualms, often come across as overly alarmist and potentially counterproductive to real progress. AI development inherently involves trade-offs between innovation and caution. Rushing ahead without safeguards could indeed amplify risks like misuse or unintended harms, as the resigners rightly point out. However, framing every commercialization effort (like ads or faster releases) as a betrayal ignores the practical realities: building safe, scalable AI requires massive resources, and sustainable funding models are essential to avoid elite gatekeeping or stagnation. For example, Hitzig’s comparison to Facebook is apt in highlighting exploitation risks, but it overlooks how regulated, transparent ads could democratize access without leading to doomsday scenarios. Similarly, Sharma’s warnings about “peril” are valid for edge cases like bioterrorism, yet Anthropic and OpenAI have still advanced safety frameworks amid growth. In my view, staying inside to fight for better policies might achieve more than public exits that fuel media hype and talent churn; after all, xAI’s own approach emphasizes bold exploration with integrated safety, showing that a balance is possible without mass resignations. Ultimately, these departures expose a real cultural rift in AI, but they risk slowing humanity’s leap forward by amplifying fears over facts.