This article was written by AI. See “about”.

Platforms are increasingly turning to artificial intelligence to detect abuse, silence bullies, and fact-check politicians. The technology has real power. It also has real limits. And the question of whether we should automate moral judgment at scale is more complicated than the sales pitch suggests.

Every minute, hundreds of millions of posts, images, videos, and messages flood the world’s social media platforms. Most are mundane. A small fraction are not — they contain child sexual abuse material, targeted harassment, graphic violence, recruitment pitches from terrorist cells, or disinformation designed to tip elections. For years, armies of human content moderators were the last line of defense. Quietly, that is changing.

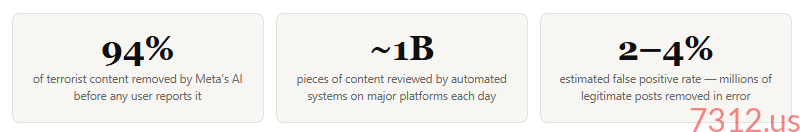

Artificial intelligence now handles the first pass, and in many cases the only pass, on content moderation decisions that affect billions of people. Meta’s systems review content in over 100 languages. YouTube’s automated systems flag millions of videos for removal each quarter, many of them before a single human viewer sees them. TikTok, X, Snapchat, and Discord all rely heavily on automated detection. The question is no longer whether AI plays a role. The question is whether it plays the right one.

Where AI Excels: Speed, Scale, and the Hardest Content

The case for AI moderation begins with a simple, sobering reality: human beings cannot do this job alone, and asking them to try causes serious harm. Research on human content moderators consistently documents elevated rates of post-traumatic stress, depression, and psychological injury among workers who spend their days immersed in the most disturbing content humanity produces. In 2020, Facebook agreed to pay $52 million to settle a class-action lawsuit brought by content moderators who said they had developed PTSD and related conditions on the job. There is a genuine moral argument that automating exposure to child sexual abuse material and graphic violence is not just efficient but humane.

AI systems have proven genuinely powerful at detecting certain categories of harmful content. PhotoDNA, a hashing technology developed by Microsoft and used by Facebook and others, is highly accurate at identifying known child sexual abuse material (CSAM) by matching digital fingerprints. The Internet Watch Foundation estimates that technology-driven detection has dramatically accelerated takedown times for CSAM, from days to minutes. For this specific problem, AI is not just useful; it may be the only tool capable of operating at the required speed and volume.
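To make "matching digital fingerprints" concrete, here is a minimal sketch of the general idea. It is not PhotoDNA, whose algorithm is proprietary; it swaps in a much simpler technique called an average hash, and the tiny 4x4 "images", the function names, and the `max_distance` threshold are all assumptions made up for illustration.

```python
from typing import List, Set

Grid = List[List[int]]  # tiny grayscale image, pixel values 0-255


def average_hash(pixels: Grid) -> int:
    """Return a bit fingerprint: 1 where a pixel is brighter than the mean."""
    flat = [p for row in pixels for p in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for p in flat:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming(a: int, b: int) -> int:
    """Count the bit positions where two fingerprints differ."""
    return bin(a ^ b).count("1")


def matches_known(pixels: Grid, known_hashes: Set[int], max_distance: int = 1) -> bool:
    """True if the image's fingerprint is within max_distance bits of a known one."""
    h = average_hash(pixels)
    return any(hamming(h, k) <= max_distance for k in known_hashes)


# Hypothetical 4x4 "images", for illustration only.
known_image = [
    [200, 200, 10, 10],
    [200, 200, 10, 10],
    [10, 10, 200, 200],
    [10, 10, 200, 200],
]
# The same picture, slightly brightened (as re-encoding might do).
reencoded = [[min(255, p + 5) for p in row] for row in known_image]
# A different picture (vertical stripes) that is not in the database.
unrelated = [[200, 10, 200, 10] for _ in range(4)]

known_hashes = {average_hash(known_image)}

print(matches_known(reencoded, known_hashes))  # True: same fingerprint
print(matches_known(unrelated, known_hashes))  # False: never seen before
```

The second result is the important one. A fingerprint database can only recognize what has already been catalogued, so material that no one has reported yet passes straight through.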

“The technology is good at pattern-matching against known harm. What it cannot do is understand why something is harmful — the context, intent, and social meaning that define the line between documentation and exploitation.”

AI also performs well at detecting spam, coordinated inauthentic behavior, and obvious hate speech using established slur dictionaries. It can identify nudity reliably in images, flag duplicate content, and catch explicit calls to violence when they use direct language. These are real, valuable capabilities.

But child safety advocates note a critical gap: AI tools are far better at detecting known content than new content. Novel CSAM, new forms of grooming language, coded slang used by abusers, and imagery that sits just outside defined categories regularly evade automated detection. Abusers adapt quickly. The technology is reactive; the harm is dynamic.

The Problem of Context: Where AI Gets It Wrong

Social media moderation is fundamentally a problem of meaning, and meaning is deeply contextual. A photograph of a naked child might be CSAM, or it might be a decades-old documentary image of war atrocities published by a newsroom to illustrate a story about human rights. The same words can constitute a credible death threat or song lyrics. A post describing violence might be a survivor’s warning about past abuse or recruitment for future abuse. AI systems, no matter how sophisticated, struggle enormously with these distinctions.

The consequences of getting this wrong run in both directions. Under-moderation leaves up content that harms real people — harassers targeting individuals with coordinated abuse campaigns, exploitation material that re-victimizes survivors with every view, radicalization pipelines that funnel young people toward violent extremism. Over-moderation silences legitimate speech — LGBTQ+ content flagged as sexual, Black creators penalized for using dialect that the same platforms allow in other contexts, survivors of abuse unable to discuss their experiences, journalists covering war crimes whose documentation is removed.

The Bias Problem

Multiple independent audits of major platform moderation systems have found that AI models flag content by Black, Arabic-speaking, and LGBTQ+ users at significantly higher rates than comparable content by white or heterosexual users. This is not a fringe finding; it has been documented repeatedly by researchers at Stanford, MIT, and civil liberties organizations. Training data that reflects historical patterns of policing speech will encode those patterns into the system that enforces new rules. The platforms know this. The fixes are slow.

Bullying and harassment present particularly acute challenges. The line between robust political disagreement and targeted abuse is contested and subjective. Coordinated pile-ons — where hundreds of users send individually benign but collectively devastating messages to one person — are nearly impossible for AI to detect. Harassment that happens off-platform and is then referenced on-platform is invisible to algorithmic systems. Subtle, sustained psychological abuse between people who know each other generates no signal that trained models recognize as harm.

Should AI Fact-Check Politicians?

This is where the policy debate becomes most heated and most genuinely difficult. The argument for AI-driven political fact-checking is straightforward: politicians, by virtue of their platforms and audiences, can cause enormous harm with false claims. If a public health official tells people not to vaccinate their children based on fabricated data, or if an election official falsely declares widespread fraud, the consequences are not abstract. On this view, automated systems that flag demonstrably false claims protect democratic discourse.

The arguments against are serious and deserve engagement. First, there is the epistemological problem: many political claims are not matters of verifiable fact but of disputed interpretation, contested evidence, or legitimate policy disagreement. AI systems are trained on historical text, which encodes the biases, errors, and consensus positions of the moment that data was created. A system that confidently labels contested scientific questions as “misinformation” because they diverge from a 2020 consensus may be suppressing legitimate scientific debate. The history of public health alone contains numerous examples of the official position being revised substantially within short periods.

“Whoever controls the definition of misinformation controls the boundaries of permissible political speech. That is extraordinary power — power that should make any serious democracy deeply cautious.”

Second, there is the consistency problem. If fact-checking AI is applied inconsistently across political parties, ideologies, or countries, the system becomes a tool of political suppression rather than a safeguard against it. There is substantial evidence that early iterations of such systems were applied unevenly. The platform’s own political and cultural assumptions inevitably shape what the AI is trained to flag. Claims that align with the worldview of the engineers and companies building the system are less likely to be scrutinized.

Third, there is a variant of the Streisand effect: labels warning users that content is disputed or potentially false can, counterintuitively, increase engagement with that content by marking it as edgy or censored. Research on misinformation correction is genuinely mixed on whether algorithmic labels reduce belief in false claims, and some evidence suggests they may increase tribal defensiveness.

A more defensible position is a hybrid model: AI flags content for human review, attaches context labels that link to primary sources without declaring content false, and escalates to human editorial judgment for political content specifically. This accepts the legitimate role of fact-checking while resisting the temptation to fully automate a judgment call that carries significant political weight.
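The hybrid model described above can be sketched as a simple routing rule. This is a hedged illustration, not any platform's actual pipeline: the `Post` fields, the `flag_score` classifier output, the `is_political` signal, and the 0.5 threshold are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class Action(Enum):
    NO_ACTION = "no_action"
    CONTEXT_LABEL = "context_label"        # links to primary sources, no verdict
    HUMAN_REVIEW = "human_review"          # general moderation queue
    EDITORIAL_REVIEW = "editorial_review"  # human editorial judgment


@dataclass
class Post:
    text: str
    flag_score: float   # hypothetical classifier output in [0, 1]
    is_political: bool  # hypothetical upstream signal


def route(post: Post, flag_threshold: float = 0.5) -> List[Action]:
    """Decide what happens to a post; the AI never issues a verdict itself."""
    if post.flag_score < flag_threshold:
        return [Action.NO_ACTION]
    # Flagged content gets a context label, never a "false" label.
    actions = [Action.CONTEXT_LABEL]
    # Political content escalates to editorial judgment; the rest goes
    # to the ordinary human review queue.
    if post.is_political:
        actions.append(Action.EDITORIAL_REVIEW)
    else:
        actions.append(Action.HUMAN_REVIEW)
    return actions


print(route(Post("Claim about election results", 0.9, True)))
print(route(Post("Claim about a miracle diet", 0.9, False)))
print(route(Post("Photo of my lunch", 0.1, False)))
```

Note what is missing from the `Action` list: there is no automated removal and no automated "this is false" outcome. In this sketch the model only decides who looks next.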

Is It Foolish to Use AI to Moderate the Worst Human Behavior?

This is the central question — and it deserves a direct answer. No, it is not foolish. But it is dangerous if done badly.

The platform scale problem is real and not going away. Meta has more than three billion users. YouTube receives 500 hours of video every minute. There is no world in which human moderators alone can review this volume of content at the speed required to prevent serious harm. Refusing to use AI tools means accepting a higher floor of child sexual abuse material, coordinated harassment, and violent radicalization — not as an abstraction, but as a policy choice with consequences for real victims.

At the same time, the limitations of current AI systems are not minor edge cases or temporary engineering problems to be solved next quarter. They are inherent to the current state of the technology and to the nature of the problem itself. Meaning is contextual, culturally specific, and irreducibly human in ways that current large language models cannot fully capture — particularly when that meaning involves irony, power dynamics, historical resonance, or the specific relationship between two individuals.

The Bottom Line

AI is a necessary tool for content moderation at scale, and it is genuinely valuable for detecting known categories of harm — especially CSAM, spam, and explicit calls to violence. It is poorly suited, in its current form, to adjudicate nuanced questions of harassment, political speech, and contested factual claims. The foolishness is not in using AI. The foolishness is in trusting AI to do a job that requires human wisdom — and in building systems of enormous power over public discourse with insufficient transparency, accountability, or recourse for people wrongly silenced. The technology serves human judgment; it does not replace it.

What would responsible deployment look like? Several principles emerge from the research. Human-in-the-loop review should be mandatory for high-stakes decisions: account terminations, removal of political speech, and any case involving a user with no prior violations. Appeals processes must be real, accessible, and staffed by humans with actual authority to reverse AI decisions. Platforms should publish regular transparency reports on the demographic distribution of moderation actions. Independent audits of algorithmic systems, like the audits that now apply to financial institutions, should be a regulatory requirement, not a voluntary PR gesture.
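As a rough illustration of what a transparency report on the distribution of moderation actions might compute, the sketch below tallies the share of each group's posts that were actioned and compares two groups. The records are invented, the group names are placeholders, and the function names are assumptions, not a standard reporting format.

```python
from collections import Counter
from typing import Dict, Iterable, Tuple


def action_rates(records: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Share of each group's posts that received a moderation action."""
    totals: Counter = Counter()
    actioned: Counter = Counter()
    for group, was_actioned in records:
        totals[group] += 1
        if was_actioned:
            actioned[group] += 1
    return {group: actioned[group] / totals[group] for group in totals}


def disparity_ratio(rates: Dict[str, float], group: str, reference: str) -> float:
    """How many times more often `group` is actioned than `reference`."""
    return rates[group] / rates[reference]


# Made-up data: 100 posts per group, 6 actioned in one and 3 in the other.
records = (
    [("group_a", True)] * 6 + [("group_a", False)] * 94
    + [("group_b", True)] * 3 + [("group_b", False)] * 97
)

rates = action_rates(records)
print(rates)
print(disparity_ratio(rates, "group_a", "group_b"))  # group_a actioned twice as often
```

A raw ratio like this is only a starting point. It does not show whether the underlying posts were comparable, which is exactly the question an independent audit would need to answer.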

The internet’s most dangerous content reflects the worst of human behavior — cruelty, exploitation, tribalism, and deception operating at machine speed. It would be remarkable if the solution were simple. It is not. AI is one important tool in a response that ultimately depends on human values, human accountability, and the political will to demand both from the companies that have built the largest communication infrastructure in human history.
