The recent wrap-up of the 7312.us experiment offers something refreshingly rare in today’s AI discourse: restraint. At a time when generative AI is alternately hailed as revolutionary or dismissed as overhyped, this project lands in a far more useful place—grounded, empirical, and honest about what these systems actually do well.

After reviewing the experiment’s design, observations, and conclusions, I find myself largely in agreement with its central thesis:

Generative AI excels at scale and summarization, struggles with originality, and delivers the most value when used as a guided tool rather than an autonomous author.

That conclusion is not only defensible—it’s increasingly difficult to dispute. But it’s also incomplete. The real implications of this experiment go further than the authors suggest, especially when viewed through the lens of economics, information quality, and the future of online content.

Let’s unpack both what the experiment gets right—and what it quietly reveals beneath the surface.

What the Experiment Gets Right

1. Scale Is No Longer a Constraint—It’s a Given

Perhaps the most important finding is also the simplest: producing content at scale is now trivial.

With minimal infrastructure, negligible cost, and roughly ten hours of weekly effort, the experiment generated over 150 blog posts. That’s not a technical achievement—it’s a structural shift. The barrier to publishing at volume has effectively disappeared.

This matters because scale used to be a moat. Today, it’s a baseline.

The implication is profound: the internet is entering an era where content abundance is no longer a feature—it’s a default condition. And when supply becomes infinite, value shifts elsewhere.

2. Originality Remains a Human Stronghold

The experiment’s conclusion that AI struggles with originality is both accurate and important.

The outputs may be fluent, coherent, and stylistically varied, but they tend to converge on familiar patterns. The framing is predictable. The arguments are safe. The insights rarely surprise.

This is not a temporary limitation—it is a consequence of how these systems work. Generative models are optimized to produce plausible continuations of existing patterns, not to break them.

As a result, they are excellent at:

- Summarizing existing knowledge

- Reframing known ideas

- Producing structured overviews

But they remain weak at:

- Generating truly novel perspectives

- Challenging assumptions in meaningful ways

- Producing original research or insight

That distinction matters more than most discussions acknowledge.

3. Persona Prompting Is Mostly Cosmetic

The experiment’s use of fictional AI personas is both creative and revealing.

While persona prompting clearly influenced tone—introducing humor, sarcasm, or stylistic flair—it had little effect on the substance of the output. The ideas themselves remained largely unchanged.

This highlights an important truth: style is easy to manipulate, but insight is not.

In other words, you can make a model sound like a character—but you cannot make it think like one.

4. AI Works Best as a System, Not an Actor

One of the most practical takeaways is the emphasis on guided, multi-model use.

Rather than relying on a single model to produce final output, the experiment found value in:

- Combining multiple models

- Iterating across prompts

- Using AI for structuring and refinement

This aligns with a broader pattern emerging in real-world usage: AI is most effective not as an independent agent, but as part of a system shaped by human intent.

The human role doesn’t disappear—it evolves.

5. “Vibe Coding” Requires Discipline

The experiment’s brief exploration of AI-generated WordPress themes and self-reviewing code offers a quietly critical lesson.

AI can generate functional code. It can also identify flaws in that code. But it generally will not do so unless explicitly asked.

This creates a dangerous illusion of completeness. Outputs may appear finished while containing subtle vulnerabilities.

The takeaway is simple but essential: if you are using AI to build systems, you must also use it to critique them.
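One way to picture that discipline is a loop in which every draft is explicitly sent back for critique before it is accepted. The sketch below is illustrative only and is not the experiment's actual workflow: `generate` and `review` are hypothetical callables standing in for whatever model calls you use, and the prompt wording is an assumption.

```python
from typing import Callable, List, Tuple


def generate_with_review(
    task: str,
    generate: Callable[[str], str],      # hypothetical: prompt -> draft code
    review: Callable[[str], List[str]],  # hypothetical: draft -> list of issues
    max_rounds: int = 3,
) -> Tuple[str, List[str]]:
    """Generate a draft, then explicitly request a critique each round.

    Returns the final draft and any issues still reported for it.
    """
    draft = generate(task)
    for _ in range(max_rounds):
        issues = review(draft)
        if not issues:
            # The reviewer found nothing to flag in this draft.
            return draft, []
        feedback = "\n".join(f"- {issue}" for issue in issues)
        # Assumed prompt format: original task plus the reviewer's findings.
        draft = generate(
            f"{task}\n\nFix these issues:\n{feedback}\n\nPrevious draft:\n{draft}"
        )
    # Round budget exhausted: report what the reviewer still sees.
    return draft, review(draft)
```

The point of the design is that review is a required step rather than an optional one. An empty issue list only means the reviewer flagged nothing, so a human read is still needed before anything ships.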

Where the Analysis Falls Short

While the experiment is thoughtful, it leaves several important questions underexplored.

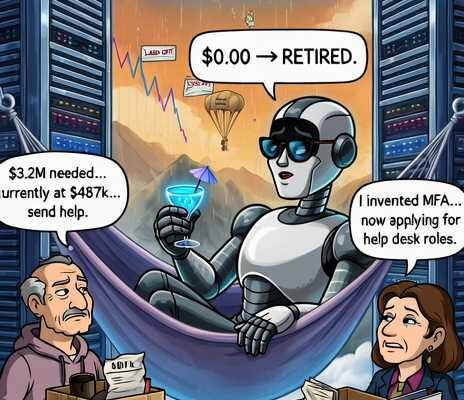

1. “Good Enough” May Be More Disruptive Than “Original”

The experiment's write-up frames lack of originality as a major limitation. Technically, that's correct. Practically, it may not matter as much as we'd like.

Most content on the internet does not require originality to succeed. It requires:

- Clarity

- Coverage

- Timeliness

- Search visibility

In these domains, “good enough” is often more than sufficient—and AI is already very good at producing “good enough” content at scale.

This suggests a more disruptive reality: AI may not replace great writers, but it can displace a vast amount of average content production.

2. Observations Without Measurement

The experiment explicitly makes no claim to scientific rigor, and that choice comes with tradeoffs.

We are not given:

- Engagement metrics

- Comparative performance against human-written content

- Error rates or hallucination frequency

As a result, the conclusions are credible but not rigorously validated. Even a small amount of quantitative analysis, such as a per-post count of checked and incorrect claims, would significantly strengthen the findings.
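To make the missing measurement concrete, here is a minimal sketch of the kind of bookkeeping that would be enough. Everything in it is an assumption for illustration: the `AuditedPost` record, its field names, and the idea of a manual claim-by-claim audit are hypothetical and do not come from the experiment.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, Iterable, Optional


@dataclass
class AuditedPost:
    # Hypothetical record filled in by a human fact-checker.
    source: str          # e.g. "ai" or "human"
    claims_checked: int  # factual claims the auditor verified
    claims_wrong: int    # claims found to be incorrect
    pageviews: int       # any engagement metric would do


def summarize(posts: Iterable[AuditedPost], source: str) -> Dict[str, Optional[float]]:
    """Aggregate error rate and mean engagement for one content source."""
    subset = [p for p in posts if p.source == source]
    checked = sum(p.claims_checked for p in subset)
    wrong = sum(p.claims_wrong for p in subset)
    return {
        "posts": len(subset),
        # None signals "nothing audited" rather than a misleading 0.0.
        "error_rate": wrong / checked if checked else None,
        "mean_pageviews": mean(p.pageviews for p in subset) if subset else None,
    }
```

Calling `summarize` once for AI-written posts and once for human-written ones would give a rough side-by-side comparison. A real study would also need a sampling plan and a consistent definition of what counts as a claim.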

3. The Risk of Plausible Errors

One of the most important challenges with AI-generated content is not obvious mistakes—it’s subtle inaccuracies that read as correct.

The experiment does not deeply examine:

- Factual drift

- Misleading summaries

- Confident but incorrect claims

This is a critical omission, because these issues scale alongside the content itself.

4. Multi-Model Advantages Need More Depth

The claim that combining models improves results is compelling, but underdeveloped. We are not shown:

- How models disagreed

- How outputs were reconciled

- Whether quality measurably improved

This is an area ripe for further exploration.
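As a starting point for that exploration, disagreement between models can at least be approximated. The sketch below scores pairwise word overlap (Jaccard similarity) between outputs for the same prompt. It is a crude lexical proxy chosen for illustration, not a method the experiment describes, and the model names used as keys are placeholders.

```python
import re
from itertools import combinations
from typing import Dict, Set, Tuple


def word_set(text: str) -> Set[str]:
    """Lowercase the text and reduce it to a set of distinct words."""
    return set(re.findall(r"[a-z0-9']+", text.lower()))


def jaccard(a: Set[str], b: Set[str]) -> float:
    """Shared words divided by all words; 1.0 means identical vocabularies."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def pairwise_agreement(outputs: Dict[str, str]) -> Dict[Tuple[str, str], float]:
    """Score every pair of model outputs written for the same prompt."""
    sets = {name: word_set(text) for name, text in outputs.items()}
    return {
        (first, second): jaccard(sets[first], sets[second])
        for first, second in combinations(sorted(sets), 2)
    }
```

Low scores only indicate that two drafts use different words, not that either is wrong. Their practical use is to point a human editor at the prompts where the models diverged and reconciliation is worth the effort.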

The Bigger Picture: What This Experiment Really Shows

Beyond its stated conclusions, the 7312.us experiment points to a broader transformation.

Content Is Becoming Commoditized

When a fully functioning blog can be produced for a few dollars and minimal effort, content itself loses scarcity. The differentiators shift to:

- Trust

- Credibility

- Distribution

- Perspective

Human Value Moves Up the Stack

As AI handles first drafts and routine synthesis, human contribution becomes more focused on:

- Original thinking

- Editorial judgment

- Narrative framing

- Strategic insight

The role of the writer evolves from producer to curator and architect.

The Internet Will Split in Two

We are likely moving toward a bifurcated ecosystem:

- High-trust, human-driven content with clear authorship

- High-volume, AI-generated content that forms the informational background

Navigating that divide will become an essential skill.

Final Verdict

The 7312.us experiment succeeds because it avoids extremes. It neither overstates AI’s capabilities nor dismisses them. Instead, it captures a more useful truth:

AI is not a replacement for human thought—but it is an extraordinarily effective amplifier.

If anything, the experiment undersells how disruptive that amplification can be. Scale, even without originality, is a powerful force. And in many domains, it is already enough.

The challenge ahead is not deciding whether to use AI—it is deciding how to use it responsibly, deliberately, and with a clear understanding of its limits.

Because those limits are not just technical—they are structural. And they will shape the future of information in ways we are only beginning to understand.
